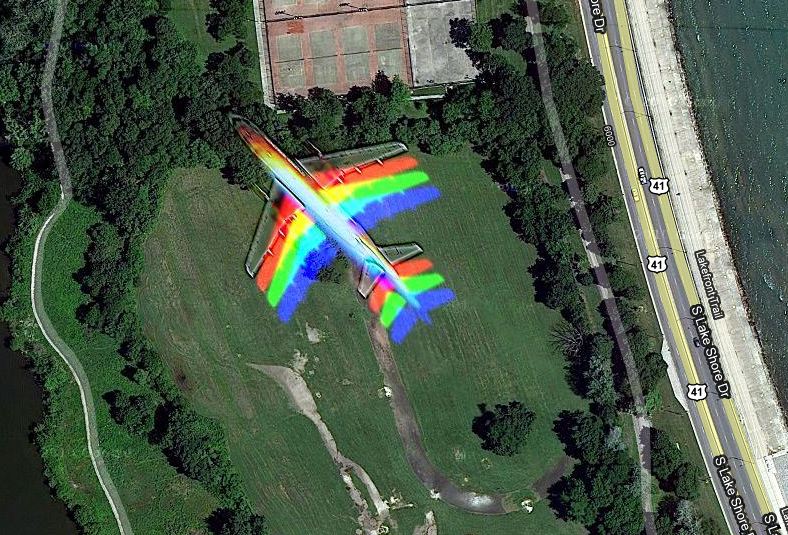

What happens when a plane flies through a Google Maps satellite photo? TheAtlantic.com has the answer:

See for yourself. Geekosystem.com notes that “the rainbow effect could have been caused by a satellite imaging process that consisted of ‘several colored exposures [taken] over a few seconds and then combined’; failing that, unicorn magic.”